Is There Design in Nature?

By Neal Kendall

(Presented at the 2016 Science Symposium)

Table of Contents

- 1 Introduction

- 1.1 Author Notes

- 2 Complex Specified Information (CSI)

- 3 Causation

- 3.1 Material vs Teleological (“Agency”) Causation

- 3.1.1 Material Causation

- 3.1.2 Agency “Intelligent” Causation

- 3.2 Limitations of Material Causation

- 3.3 Is the Universe Causally Closed?

- 4 Complexity of Life

- 4.1 Life Primer

- 4.1.1 DNA Polymerase

- 4.1.2 DNA Helicase

- 4.1.3 RNA Transcription – Make RNA from DNA - RNA Polymerase

- 4.1.4 RNA Splicing – Making RNA Transcripts - Spliceosome

- 4.1.5 Ribosome Translation – Make Proteins from RNA

- 4.1.6 Bacterial Flagellum

- 4.1.7 Eye

- 4.2 Protein Sampling Problem

- 4.3 “Codes”

- 4.3.1 DNA Transcription-Translation Code

- 4.3.2 Epi-Genetic Code

- 4.3.3 Sugar Code

- 4.3.4 Membrane Code

- 4.3.5 “Endogenous” Electric Code

- 4.3.6 Summary of Codes

- 4.4 Summary – Complexity of Life

- 5 Origin of Life (Abiogenesis)

- 5.1 RNA World

- 5.2 Calculations of Probabilities on the Origin of Life

- 6 Neo-Darwinism

- 6.1 What is Neo-Darwinism

- 6.2 Current Status of Neo-Darwinism

- 7 Are Materialist Explanations for the Evolution of Life Valid?

- 7.1 Sudden Appearance of Complex Biologic Features

- 7.1.1 Fossil Record

- 7.1.2 Mechanisms of Rapid Evolutionary Change

- 7.1.3 De Novo Genes

- 7.1.4 Computer Simulations

- 7.2 Non-Randomness of Evolutionary Change

- 7.2.1 Epigenetics

- 7.2.2 Natural Genetic Engineering - Transposons

- 7.2.3 Horizontal Gene Transfer

- 7.2.4 Overlapping Codes

- 7.2.5 Edge of Evolution – Manyuan Long vs Doug Axe & Michael Behe

- 8 Convergent Evolution

- 8.1 Convergences at the Organism Level

- 8.2 Convergences at the Organ or Tissue Level

- 8.3 Convergences at the Molecular Level

- 9 Summary of Neo-Darwinian Evolution

- 9.1 Trends - Non-Randomness, Saltation

- 9.2 Visualizing Darwinian Evolution

- 9.3 God of the Gaps

- 9.4 Intelligent Design

- 9.5 Where is the Debate Heading?

- 10 Vitalism

- 10.1 What Has to Be Explained?

- 10.2 Why was Vitalism Dismissed?

- 10.3 Cell Intelligence

- 10.4 “Self Organization”

- 10.5 Molecular Location in the Cell

- 10.5.1 DNA Replication

- 10.5.2 RNA Transcription

- 10.5.3 RNA Splicing

- 10.5.4 mRNA Translation (Protein Synthesis)

- 10.6 Evolution

- 10.7 Summary - Vitalism

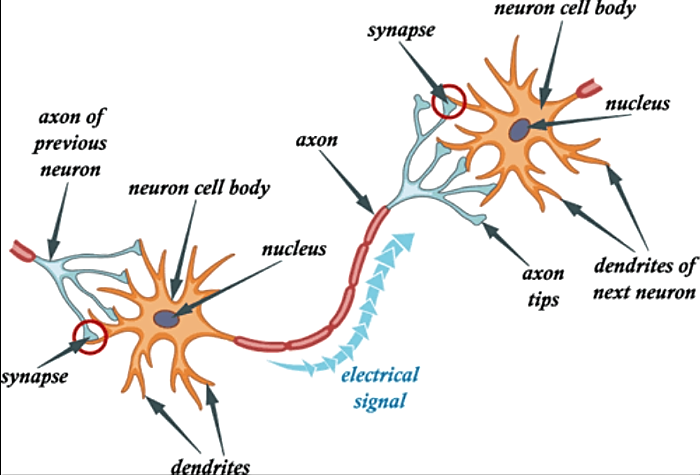

- 11 Emergence of Consciousness and Mind

- 11.1 A Brief Review of Current Neuroscience

- 11.2 Consciousness

- 11.2.1 Qualia - Perception (“The Hard Problem”)

- 11.2.2 Intentionality

- 11.3 Theories of Mind

- 11.3.1 Dualism

- 11.3.2 Epiphenomenalism

- 11.3.3 Eliminative Materialism

- 11.3.4 Type Physicalism or Identity Theory

- 11.3.5 Functionalism

- 11.4 Property Dualism – Emergence

- 11.4.1 Emergent Mind - In Relation to Theism / Atheism

- 11.4.2 Computational Theory

- 11.4.3 Problems with the Emergent Theory of Mind

- 11.5 Summary – Emergence of Consciousness and Mind

- 12 Falsifications of Materialism

- 12.1 Falsification of Materialism #1: Dreams

- 12.1.1 Terminology

- 12.1.2 Attributes of Dreams

- 12.1.3 Emulation of the Senses

- 12.1.4 What a Materialist Needs to Explain

- 12.1.5 Possible Materialist Explanations

- 12.1.6 Falsification Using Probabilities of Complex Specified Information

- 12.1.7 Near Death Experiences, End of Life Experiences, DMT and other “Hallucinations”

- 12.1.8 Summary - Dreams

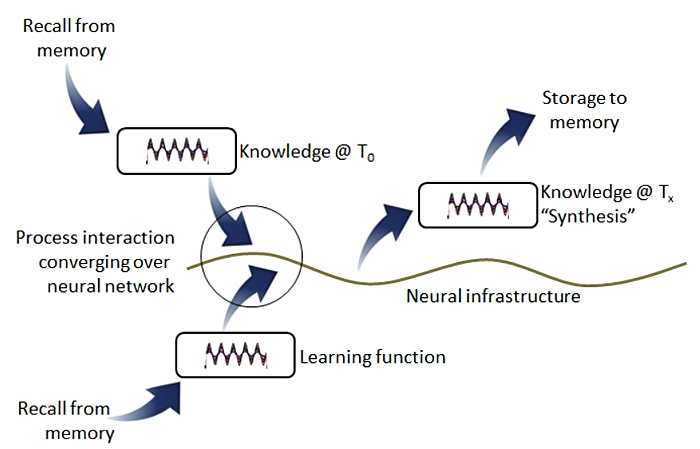

- 12.2 Falsification of Materialism #2: Continuity of Thought

- 12.2.1 Materialism’s Claims

- 12.2.2 Complex Specified Information

- 12.2.3 Foreknowledge

- 12.2.4 Continuity of Thought and Free Will

- 12.2.5 Probabilities

- 12.2.6 Neo-Darwinism

- 12.2.7 Continuity of Thought - Summary

- 12.3 Falsification of Materialism #3: Constancy and Resumption of Self

- 12.3.1 Materialist Claims

- 12.3.2 Near Death Experiences

- 12.3.3 Summary – Continuity and Resumption of Self

- 12.4 Summary – Falsifications of Materialism

- 13 Are Materialist Objections to Substance Dualism Valid?

- 13.1 Causal Closure of the Universe

- 13.2 Correlations

- 13.2.1 Man with Almost no Brain

- 13.2.2 Girl with Half Brain Removed

- 13.2.3 Persistent Vegetative State

- 13.2.4 Persinger and God Helmet

- 13.2.5 Callosotomy

- 13.3 Libet-Type Experiments and Free Will

- 13.4 Non-Local Consciousness

- 13.4.1 Consciousness and Quantum Physics

- 13.4.2 Radio Receiver Model

- 13.5 Summary

- 14 Mystical Experiences and Hallucinations

- 14.1 Near Death Experiences

- 14.1.1 Out-Of-Body

- 14.1.2 Tunnel and Light, Deceased Relatives, Divine Beings

- 14.1.3 Life Review

- 14.1.4 A Barrier

- 14.1.5 Ineffable Content

- 14.1.6 Time

- 14.1.7 Changed Lives

- 14.1.8 Notable Near Death Experience Cases

- 14.2 Neuroscientists Views on Near Death Experiences

- 14.2.1 Psychological Factors

- 14.2.2 N-Dimethyltryptamine (DMT)

- 14.2.3 Demon Haunted World – Alien Abduction Experiences

- 14.3 Deathbed Visions

- 14.4 After Death Communication

- 14.5 Induced After Death Communication

- 14.6 Summary – Mystical Experiences and Hallucinations

- 15 What Are These Mystical Experiences?

- 15.1 Out-of-Body Experiences

- 15.1.1 Dissociation – Out-of-Body

- 15.1.2 Near Death Experience – Out-of-Body

- 15.2 The AWARE Near Death Experience Study

- 15.3 Are Materialist Explanations of Near Death Experiences Reasonable?

- 15.3.1 Near Death Experience – Life Review

- 15.4 Summary – What are These Mystical Experiences

- 16 The Power of Love

- 16.1 Shared Death Experiences

- 16.2 Shared Induced After Death Communication

- 16.3 Summary – The Power of Love

- 17 Summary

- 17.1 Creative Complex Specified Information Flow

- 17.2 Is Materialism Waning?

- 17.3 Cultural War - Materialism vs Idealism

1 Introduction

This paper takes a broad-brush approach to the question of whether there is intelligent design in nature, that is, whether nature exhibits the signature of teleology. Design on the one hand, and strictly material causes on the other, constitute a binary proposition. By the law of the excluded middle, one can infer design if material causes are deemed insufficient to account for what we see in nature. Either intelligence is a necessary cause of the splendid complexity nature reveals, or it isn’t. Claiming that there is some contingency in nature is not a nullification of the design argument.

Materialists claim that natural processes can produce all cases of “apparent design” exhibited by nature. Teleologists claim that there are at least some attributes of nature that will forever elude purely natural causal explanations. The statements in The Urantia Book pertaining to living organisms and the human mind are without question supportive of the notion that intelligent purpose is necessary and that material processes are insufficient to account for the complexity of living organisms.

The Urantia midwayers have assembled over fifty thousand facts of physics and chemistry which they deem to be incompatible with the laws of accidental chance, and which they contend unmistakably demonstrate the presence of intelligent purpose in the material creation. [58:2.3] (P/ 665)

The approach I will take in this paper will be to show that material causes are insufficient to account for the repeated and rapid appearance of what I will refer to as “Complex Specified Information” (CSI), which I define in detail below. The paper will focus on two broad categories of phenomena to infer design: 1) complex specified information exhibited by living organisms (the origin, evolution and basic operation of living organisms), and 2) complex specified information exhibited by human consciousness, thought, imagination, mystical experiences and even hallucinations. The paper will depart from these themes a bit in the final section to discuss a positive approach to detecting design through the power of love as it relates to mystical experiences.

I will show that the vast amount of complex specified information that nature exhibits precludes any theory that limits itself to strictly material causes. The strongest case against materialism relates to the qualities of human consciousness and mind: dreams, thought streams, mystical experiences and hallucinations. For mystical experiences to count as powerful evidence against materialism, it is not necessary that you accept them as genuine revelations. It is only necessary that you accept that the personal accounts of the auditory and visual experiences accurately describe what people experienced.

I will offer three (3) “falsifications of materialism,” which are accessible to anyone with just a bit of reflection. These falsifications reveal levels of complex specified information that are both quantifiable and intuitive. I believe they are unassailable. In fact, I have presented them on a couple of well-known atheist forums as well as on the primary intelligent design forum, where many leading atheists participate, and no one has been able to offer any substantive contravening evidence to any of them.

The paper will not cover the origin of the universe or the so-called fine-tuning of the universe’s physical parameters. I will comment briefly here: materialist scientists would have us believe that the universe sprang forth from nothing. When the arguments are looked at closely, however, one quickly realizes that “nothing” is actually not nothing, but something. Frankly, I have not examined the Big Bang theory much at all. My hunch is that, much as in evolutionary science and neuroscience, theory far outpaces the evidence. It strikes me as hubris for scientists to think they know what was really going on in the first few instants of the universe some 13.8 billion years ago. After all, we can’t even figure out what happened to the Lindbergh baby more than 80 years ago.

The fine-tuning argument, the centerpiece of theistic evolutionists’ claim for design, is under assault as well from materialist scientists who hold that the “many universes” model is a perfectly viable scientific theory. The many universes model extends the number of trials to infinity, or to whatever is needed to make the probabilities work, in order to remove the notion that the universe seems to be “balanced on the edge of a knife,” as physicist Paul Davies once put it.

1.1 Author Notes

This paper really has to be read on-line and not printed. There are many links to videos and debates. Reading on-line also saves trees.

This is very much a work in progress. It is really little more than a robust table of contents. There is so much more that could be said on each topic. I pulled this together over about a nine-month period while working in a demanding career, raising two teenage girls, a wife, and attending to two needy dogs and a cat. It was a great challenge in multiplexing. That said, I did not have anyone editing or reviewing the paper, which is as close to a book as it is to an article. I have discovered over the years that I am not a great editor or proofreader of my own work. So I apologize in advance for missing words, redundancy, flipped phraseology, etc.

I am by nature a skeptical person, and my skepticism runs in all directions. Given that, I can say that there is no person and no single book in which I can put all my faith. Having said that, there is nothing in The Urantia Book related to the life sciences and human consciousness and mind that I have discovered to be in error. Quite the contrary: in all cases, what I have discovered is that the statements in The Urantia Book are very reasonable and are in fact being confirmed by the evidence as I understand it, despite what the vast majority of scientists might claim. The lone exception is vitalism, which is the most profound misalignment between the accepted theories in the life sciences and the statements in The Urantia Book. However, as I discuss in the paper, the issue of vitalism has been misunderstood, and its dismissal by virtually every scientist in the world is premature. In fact, I believe there is a lot of evidence that vitalism is true.

I am not a scientist, nor am I an academically trained philosopher. I am a hobbyist in these areas. It is best to think of this as a reporting of recent scientific research that bears on the question of design in the universe. However, don’t discount the role of a reporter. Reporters, like industry analysts, often (indeed usually) have a better perspective on what is really going on than those down in the trenches of a scientific or technological endeavor. If you wanted to know what was really going on in a particular industry, i.e. what direction the industry is heading and what technological changes might be taking place that affect its products, my contention is that you would gain greater insight by consulting an industry analyst, whose role it is to assess the direction of an industry for financial purposes, than by consulting an engineer busily writing code to develop a particular product for that industry. Over time, I have come to view my lack of formal academic training in science and philosophy as an asset and not a liability. It frees the mind by avoiding the “echo chamber” created by the unanimity of thought that plagues the universities.

With that, let’s get started.

2 Complex Specified Information (CSI)

To understand the primary purpose of this paper, it is necessary to understand the concept of “Complex Specified Information” (CSI). The concept was introduced by philosopher, mathematician and theologian William Dembski, a leading Intelligent Design theorist, in his book The Design Inference. It is a bit of a clumsy term, but I don’t know what else to use. Sometimes I will make the term even clumsier by adding the word “creative” in front of it. Complex specified information can best be understood as complex structures, functions, processes or systems that produce function or meaning or achieve something important. Whether complex information achieves something is most commonly assessed by whether it conforms to an independently given pattern.

Let’s take a closer look at each word in the phrase.

2.1 Definition

“Complex(ity)” involves the intricate arrangement of many components. Components can be physical things such as molecules, building materials or people, or they can be abstract things such as language, thoughts, mental images, numbers or human actions.

“Specified” means that the components in a complex system are arranged very specifically and that the system tolerates little or no perturbation if it is to maintain its structure, its function or whatever it achieves. That is to say, CSI systems are highly constrained.

“Information,” as first formalized by Claude Shannon, was framed in terms of messages sent down a communication channel. It can perhaps best be thought of as a “ruling out” of alternatives: the serial unveiling of a string of characters, for example, rules out a large set of alternative possibilities. In this way, the string of characters carries greater meaning (greater information) with each word disclosed.
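To make the “ruling out” idea concrete, here is a minimal Python sketch. It assumes a hypothetical eight-word vocabulary in which every word is equally likely; revealing characters one at a time narrows the set of candidate words, and the information gained, in Shannon’s sense for equally likely outcomes, is the base-2 logarithm of (candidates before) / (candidates still possible). The word list and the function name are illustrative only.

```python
import math

# Hypothetical vocabulary: eight equally likely three-letter words.
VOCABULARY = ["cat", "car", "cow", "dog", "dot", "bat", "bar", "bee"]


def bits_gained(prefix: str, vocabulary: list[str]) -> float:
    """Information (in bits) gained on learning that the word starts with `prefix`.

    With equally likely candidates, information = log2(N_before / N_remaining).
    Assumes at least one word matches the prefix.
    """
    remaining = [word for word in vocabulary if word.startswith(prefix)]
    return math.log2(len(vocabulary) / len(remaining))


for prefix in ["c", "ca", "cat"]:
    left = sum(word.startswith(prefix) for word in VOCABULARY)
    print(f"{prefix!r}: {left} candidate(s) left, {bits_gained(prefix, VOCABULARY):.3f} bits")
```

Each revealed character rules out more alternatives, so the printed information rises from about 1.415 bits to 2 bits and finally to the 3 bits needed to single out one word among eight.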

Complex specified information systems display a high degree of intricacy which means they have a lot of dependencies and interdependencies between components. This is why they have many constraints. Any modification of the complex specified information arrangement or system is likely to break it, i.e. be ruinous to its meaning or function or whatever it achieves.

Because CSI systems have many components, interdependencies and constraints, they are highly improbable. The improbability arises from the relatively small set of arrangements that a complex specified information system could assume in order to achieve something, compared to the much larger set of possible arrangements of the same components that achieve nothing and do not correspond to any independently known pattern.

2.2 Complex Specified Information vs Randomness

It is important to emphasize that improbability is not, in and of itself, sufficient to define a complex specified information system. Complexity can be confused with disorder (chaos). Disordered systems have some complexity in that they have, or could have, many components, and the arrangement of those many components could be highly improbable. Given two strings, 1) a string of 1000 human text characters that yields no understanding, or 2) a string of 1000 human text characters of a Shakespeare Sonnet, without knowing the origin of either string, how could one assess the relative probabilities of each? Consider the following two character strings:

Plsudfirufknvjqveiuyeoirirqdvbfoeywendckndsapokfhqiurehfpiarfoka…

As from my soul which in thy breast doth lie: That is my home of love…

Because complex specified information systems are improbable and achieve something (usually by virtue of corresponding to some known pattern), they can be differentiated from a disordered system. Clearly, of the two example strings above, the second achieves something, and there would be a strong inference of design even though, had strings of equal length been generated randomly, the probability of each occurring would be the same.
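The claim that both strings are equally probable under random generation can be checked with a short Python sketch. It assumes, purely for illustration, that each character is drawn independently and uniformly from the 95 printable ASCII characters, and it compares the visible portions of the two example strings truncated to the same length. Under that model the probability depends only on the length of the string, never on whether it means anything.

```python
import math

ALPHABET_SIZE = 95  # assumption: printable ASCII characters, each equally likely


def log2_probability(text: str, alphabet_size: int = ALPHABET_SIZE) -> float:
    """Log base 2 of the chance that uniform random typing yields exactly `text`."""
    return -len(text) * math.log2(alphabet_size)


gibberish = "Plsudfirufknvjqveiuyeoirirqdvbfoeywendckndsapokfhqiurehfpiarfoka"
sonnet = "As from my soul which in thy breast doth lie: That is my home of love"

n = min(len(gibberish), len(sonnet))  # compare equal-length prefixes
p_gibberish = log2_probability(gibberish[:n])
p_sonnet = log2_probability(sonnet[:n])
print(n, p_gibberish, p_sonnet)  # the two log-probabilities are identical
```

Probability alone therefore cannot separate the two strings; the difference the paper points to lies in the second string’s correspondence to an independently known pattern.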

Despite the obviousness of this, disorder and complexity are commonly confused, and in fact, when debating in forums, you can count on a materialist-atheist confusing the two. There is an entire cottage industry of these confused individuals who seek to convince people that the poor probabilities, especially as they relate to evolution, are manageable. A materialist will claim that the improbability of a meaningful sequence is simply an illusion, because any particular string of characters or DNA base pairs of a given length has the same probability whether or not it renders any meaning. And this is true.

The first obvious problem with this viewpoint is that, by this type of reasoning, anything, no matter how seemingly improbable, is not only possible but probable. Suppose you were to put an antenna out in the middle of the solar system and capture a signal, and that when you decoded the signal with the ASCII character set, you found that it was, word for word, the text of Genesis. Clearly, in that case, you would know that the signal came from an intelligent source. You would know this because it conforms to a pre-specified pattern. When you present the case this way to an adversary in debate, they quietly disappear from the forum.

2.3 Complex Specified Information vs Order

Complexity is also often confused with “order.” Ordered arrangements or systems are not the same as complex systems although ordered systems have some degree of complexity by virtue of their specificity. To be an ordered system, every component must be what it is for the order—the pattern—to be sustained. Therefore it is highly specific. But the ordered arrangement is deterministic whereas the complex specified information arrangement or system is not deterministic; it is “creative.”

One way to distinguish between order and complexity is to reflect on what would be required to create an ordered arrangement of components on the one hand versus a complex arrangement on the other. Let’s say you want to explain to someone how to produce a particular arrangement of human text characters, or alternatively how to write a computer program in a low-level programming language to produce it. What would the instruction set look like for producing an ordered arrangement versus the instruction set for creating the complex system? Would the instruction set in each case involve many instructions or few? Let’s take two character strings:

abbcccdddd…yyyyyyyyyyyyyyyyyyyyyyyyzzzzzzzzzzzzzzzzzzzzzzzzzzzzz…

As from my soul which in thy breast doth lie: That is my home of love…

While both strings are equally improbable were they to be generated randomly, the instruction set in machine-level code for the ordered pattern would be quite brief, because the pattern is deterministic and can therefore be produced by an algorithm. It would require a database of letters, a counter that is incremented, and a step that grabs the letter associated with the counter and prints it the proper number of times. As the number of characters increases, the complexity of the code hardly increases at all.
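The instruction set just described (a table of letters, a counter, and a loop that prints each letter the proper number of times) can be sketched in a few lines of Python. This is an illustrative high-level sketch rather than machine-level code, and the function name is hypothetical. The point to notice is that the program text is the same size whether it is asked for the first few letters or the full pattern.

```python
import string


def ordered_pattern(num_letters: int = 26) -> str:
    """Build 'abbccc...' where the i-th letter of the alphabet is repeated i times.

    `num_letters` may be at most 26 (one run per lowercase letter).
    """
    letters = string.ascii_lowercase  # the "database" of letters
    pieces = []
    for counter in range(1, num_letters + 1):  # increment a counter
        letter = letters[counter - 1]          # grab the letter for this counter
        pieces.append(letter * counter)        # emit it `counter` times
    return "".join(pieces)


pattern = ordered_pattern()
print(pattern[:10], "...", pattern[-5:])  # abbcccdddd ... zzzzz
print(len(pattern))                       # 1 + 2 + ... + 26 = 351
```

Producing a Sonnet, by contrast, would have no such rule to exploit: every character would have to appear literally in the program.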

The code for something meaningful in human text (such as a Shakespeare Sonnet) would require each character to be entered in the code. As the string gets longer, for example to produce a short story, the complexity of the program increases linearly. Whereas you could easily write a program to generate any ordered character string, you really couldn’t produce a program to write poetry in any reasonable time frame. Materialists often confuse order with complexity, and they claim from this confusion that since order can be produced by nature, nature can produce complexity. In fact, even well-educated and seasoned atheists often confuse order and complexity.

Despite the fact that an ordered string and a creative string are equally improbable to be produced by chance, the instruction set required to produce each string reveals the greater complexity of the Sonnet or story. Furthermore, the Sonnet is more meaningful in that it conforms to a known pattern. A Sonnet is creative; the ordered string is deterministic in that it can be created using an algorithm.
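A common, computable stand-in for the length of the shortest instruction set is the size of a string after general-purpose compression (the true shortest-program length, known as Kolmogorov complexity, cannot be computed in general, and compression only gives an upper bound). The Python sketch below, offered only as a rough illustration, compresses the ordered pattern and a random string of the same length and alphabet with the standard `zlib` module. The random string uses a fixed seed, an assumption made so that the example is repeatable.

```python
import random
import string
import zlib


def compressed_size(text: str) -> int:
    """Bytes needed after zlib compression: a rough upper bound on description length."""
    return len(zlib.compress(text.encode("ascii"), 9))


# Ordered string: the i-th letter repeated i times ("abbccc...").
ordered = "".join(letter * i for i, letter in enumerate(string.ascii_lowercase, start=1))

# Random string of the same length over the same 26-letter alphabet.
rng = random.Random(2016)  # fixed seed for repeatability
scrambled = "".join(rng.choice(string.ascii_lowercase) for _ in range(len(ordered)))

print(len(ordered), compressed_size(ordered), compressed_size(scrambled))
```

The ordered string shrinks dramatically because a short rule generates it, while the random string barely shrinks. Compression measures regularity, not meaning, so it captures the distinction between order and randomness but not the further ingredient of specification.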

2.4 Quantifying Complex Specified Information

Complex specified information can be quantified, but in many cases it is difficult to do so. The easiest way to quantify complexity is to distill a complex system down to a human text description. Let’s say there are two widgets, A and B, that you are describing to an agreed-upon level of detail. Widget A requires 1000 human text characters to describe and Widget B requires 5000. Clearly the second widget is more complex.

Since a widget could be described in many different ways, establishing an absolute measure of complexity is next to impossible. But when comparing like things, a relative quantification of complexity can be done by counting the possible character strings of the required length over a 26-letter alphabet: the relative complexity of Widget A is $26^{1000}$, and the relative complexity of Widget B is $26^{5000}$. It is quite a bit more involved than that, but as a rough comparison I think that makes the point.

Actually, the convention is to represent information quantity in binary form. To do this you could convert the text characters to their binary ASCII equivalent (ASCII is a code computers use to map binary digits to a human text character set), storing each character in 8 binary bits. In that case, Widget A’s 1000 characters occupy 8000 bits, corresponding to $2^{8000}$ possible arrangements. As I mentioned, though, there are many ways to describe something in human text; therefore the real quantity of complex specified information would be considerably less than that.
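The arithmetic behind the Widget figures can be checked with a short Python sketch. Taking the base-2 logarithm turns a count of possible arrangements into bits: $26^{1000}$ arrangements correspond to about 4,700 bits, while the 8-bit ASCII encoding of the same 1000 characters occupies 8000 bits. The 26-letter alphabet and the 8 bits per character are the assumptions used in the text; the function name is illustrative.

```python
import math


def bits_for_arrangements(alphabet_size: int, length: int) -> float:
    """log2(alphabet_size ** length): bits needed to single out one string of that length."""
    return length * math.log2(alphabet_size)


widget_a_bits = bits_for_arrangements(26, 1000)  # about 4700.4 bits
widget_b_bits = bits_for_arrangements(26, 5000)  # about 23502.2 bits
ascii_a_bits = 1000 * 8                          # 8 bits per stored ASCII character

print(f"Widget A: {widget_a_bits:.1f} bits over a 26-letter alphabet")
print(f"Widget B: {widget_b_bits:.1f} bits over a 26-letter alphabet")
print(f"Widget A as 8-bit ASCII: {ascii_a_bits} bits")
```

The ASCII figure is larger because 8 bits can distinguish 256 symbols, far more than the 26 letters actually used.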

Here are some examples of complexity: A house of cards is a complex system. The more cards stacked, the higher the complexity assuming the structure involved is equally sensitive to perturbation, i.e. equally constrained. A small house of cards can be more complex than a somewhat larger house of cards if the small house has far greater interdependencies and constraints.

Planning a wedding reception for 150 is far more complex than planning a luncheon for 12. Aside from the larger group, a wedding involves many more dependencies and constraints than a luncheon (flowers, music, seating arrangements, food selection, etc.), not to mention having to deal with a “bridezilla.” Building a house is complex; a two-story house is more complex than a single-story house, all other things being equal.

Writing a story or a poem is a complex endeavor. A poem would be more complex than a story of the same length because of the constraints related to writing poetry imposed by rhythm, rhyme, metaphor, etc. Writing a song would be more complex than writing a poem of the same quality because now you have introduced music with a melody, chords and a bass line. And of course living systems exhibit vast quantities of complex specified information. So does human intellect.

To summarize, complex specified systems have many components, have many dependencies as well as interdependencies, are highly constrained and therefore are highly improbable. Complex specified information systems achieve something—they conform to some known pattern. And they are non-deterministic.

3 Causation

Philosophy can be divided into two main categories: Idealism and Materialism. Idealism, in brief, asserts that mind is the ultimate foundation of all reality. That is not to say that material reality does not exist (although some claim that it is an illusion), just that mind is the fundamental reality. Materialism, or more properly physicalism, is the theory that everything is physical, that there is nothing beyond the physical. Physicalism and materialism are often used interchangeably; physicalism explicitly includes energy and the physical laws that nature abides by. Nevertheless, I will simply use the term “materialism” throughout.

Materialism is the most common form of monism, which is the view that reality is composed of just one type of substance. For materialists that substance is matter. The other main category of monism, which is uncommon in Western thought, claims that mind is the fundamental “substance” of the universe and that matter is illusory.

For a modern scientific materialist there is no other substance beyond matter and energy. This means that there is no such thing as an immaterial mind, for example, or a vital force that infuses life with its marvelous qualities; nor, going further, are particles themselves endowed with some quality of mind (panpsychism).

3.1 Material vs Teleological (“Agency”) Causation

One of the fundamental differences between the claims of materialism vs idealism pertains to the nature of causation. There are two general categories of causation that I want to define: 1) material causation and 2) agency or “intelligent” causation.

The claim made in this paper is that material causation cannot account for the creative complex specified information that we observe in nature, whether it occurs in living organisms, in our subjective experience or in the behavior of others as we observe them. Therefore an inference of design (teleology) can be made.

3.1.1 Material Causation

Material causation is lawful and deterministic. Mathematics is used to describe nature because nature (the “physical sciences,” anyway) conforms to laws. However, despite the otherwise deterministic nature of material causes, two types of randomness are involved in them as well. The first is randomness in the epistemological sense: although a cause may be deterministic, we cannot understand its true nature due to technological limitations. Particles diffusing through water, for example, seem to act randomly. These particles are really behaving according to physical law, but we are limited in our ability to completely understand it.
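Epistemological randomness can be illustrated with a pseudo-random number generator, which is completely deterministic yet produces output that looks random to anyone who does not know the rule. The Python sketch below drives a one-dimensional random walk (a crude stand-in for a diffusing particle) with a linear congruential generator. The constants are the widely published Numerical Recipes values, chosen here purely as an example; the function names are illustrative.

```python
def lcg(seed: int):
    """Linear congruential generator: x_next = (a * x + c) mod 2**32, fully deterministic."""
    a, c, m = 1664525, 1013904223, 2**32
    x = seed
    while True:
        x = (a * x + c) % m
        yield x


def random_walk(seed: int, steps: int) -> list[int]:
    """Positions of a 1-D walker that steps +1 or -1 according to the generator's top bit."""
    gen = lcg(seed)
    position, path = 0, []
    for _ in range(steps):
        position += 1 if next(gen) >> 31 else -1  # top bit of a 32-bit value: 0 or 1
        path.append(position)
    return path


print(random_walk(seed=42, steps=20))
```

Running it twice with the same seed reproduces exactly the same erratic-looking path: the apparent randomness reflects the observer’s ignorance of the rule, not an absence of one.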

The other type of randomness pertains to quantum physics. The randomness of quantum physics is often said to be “ontological randomness,” which is an academic way of saying that there is an inherent randomness in nature at the particle level. I discuss quantum physics a bit further down in this section.

3.1.2 Agency “Intelligent” Causation

Agency causation is causation involving mind, which is commonly understood to be immaterial. I suggest that agency causation, intelligent causation and teleology are synonymous. Idealists, of course, claim that agency causation is the primary form of causation, especially causation related to what we would call creativity. Mind (immaterial mind), an idealist would say, is the true cause of creative complex specified information.

Materialists will sometimes use the term agency as well. But when a materialist uses the term agency, they of course do not mean that there is an immaterial mind at work. Rather, they mean that nature has acquired a secondary-level cause, a system, that, although deterministic, exhibits the characteristics of minded agency. In other words, these material systems might be said to “emulate” true agency. These “systems” are algorithmic. Algorithms carry out a pre-arranged plan in a deterministic way.

The fundamental difference between material agency and true minded agency is that a true agent can be creative: it can readily create complex specified information. Materialists claim that material causes can produce complex specified information by, in effect, emulating an agent, but they acknowledge that such creativity is ultimately the result of randomness and determinism.

3.2 Limitations of Material Causation

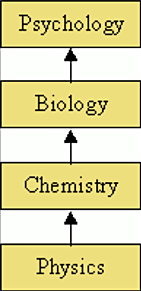

Materialists view reality—nature—as a hierarchy of increasingly complex layers. At the lowest level of the material hierarchy are material particles described by physics, particles combine to form elements and molecules which are studied by chemists, molecules combine and produce life which is the domain of biology, and living entities—cells—can combine in ways to produce mind (understood to be entirely material) which is the domain of psychology and finally, the minds of humanity are collectively studied by sociology. Most materialists believe that it is the lower levels that completely determine the attributes of the higher level. This is called “reductionism.”

Some materialists believe that the lower levels of material reality do not fully determine the higher levels. They would suggest that nature has in effect emulated agency causation. Life itself, and the theory of the emergent physical mind—property dualism—are examples materialists would point to as exhibiting agency that has arisen through deterministic causes interspersed with some occasional randomness. This exception to reductionism—emergence—is the idea that new qualities emerge that cannot be inferred or predicted from an examination of the lower levels.

Because nature abides by laws, nature’s ability to produce something that exhibits complex specified information must involve a stochastic (random) quality. This random quality serves as the input to a process that materialists claim can build complexity incrementally. Materialists believe they have discovered such a process related to the most complex things we observe, living organisms. The process, of course, is the tandem interworking of random mutation and natural selection proposed by Neo-Darwinism. A good deal of the focus of this paper will be in assessing whether this process is capable of producing the complexity that we see in living organisms.

Here is the very important thing to remember: the secret of materialism’s claim to be able to explain how complexity might arise through material causes stems from what scientists perceive to be the successful discovery of a law of nature that can build complexity. That law of nature is natural selection. Natural selection, materialists claim, explains how life evolved, including consciousness and higher-level thought. Materialists acknowledge that natural selection cannot explain the origin of life (abiogenesis), but they are working on ways to apply the principle of selection to do so. As you will see, they have not come close to doing that.

But natural selection can only build complexity, Neo-Darwinists would acknowledge—if it can build complexity at all—by an incremental process whereby a small random beneficial change occurs and is then locked in by natural selection. Stated another way, natural selection is not a sufficient material cause; it can at best be a necessary cause. Random mutation is the other necessary material cause. Together—random mutation and natural selection—materialists would claim, constitute a sufficient cause to explain complex specified information.

According to Intelligent Design proponents (teleologists), the fundamental problem with Neo-Darwinism lies primarily with random mutation. Natural selection has its limitations as well, but the probabilities that Intelligent Design proponents point to on the random-mutation side pose profound difficulties. Many critics fail to appreciate that Intelligent Design focuses almost exclusively on the mutation side of the Neo-Darwinian mechanism.

A small, random, beneficial change is far more likely to occur than a large systemic beneficial change, whose probability is prohibitive. If complexity is built by small incremental changes, each locked in by natural selection, then each improbable step has to be overcome only on its own, one after another, and the waiting times for the individual steps simply add up over vast amounts of time. For a large systemic change equivalent to those several small changes to occur in a single step, by contrast, the probabilities of each distinct change must be multiplied together, yielding a vastly smaller overall probability. The bottom line is that nature, limited to material causation, makes no leaps ("Natura non facit saltus").
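The contrast between locking in changes one at a time and needing them all at once can be made concrete with a few lines of Python. This is a minimal sketch under stated assumptions: each required change is treated as an independent event with the same hypothetical per-generation probability `p`, and the values `p = 1e-8` and `k = 5` are illustrative only, not figures from any study. With selection locking in each step, the expected waiting times add (k/p); for a single leap the probabilities multiply (1/p^k).

```python
# Illustrative comparison; p and k are hypothetical values, not data.

def expected_wait_stepwise(p: float, k: int) -> float:
    """Expected generations when each of k changes (probability p per
    generation) is locked in by selection before the next is needed.
    Each step is a geometric wait with mean 1/p, and the waits add."""
    return k / p


def expected_wait_single_leap(p: float, k: int) -> float:
    """Expected generations when all k changes must arise together in one
    generation: the probabilities multiply, giving a mean wait of 1 / p**k."""
    return 1.0 / p ** k


p, k = 1e-8, 5
print(f"step by step: {expected_wait_stepwise(p, k):.1e} generations")
print(f"single leap:  {expected_wait_single_leap(p, k):.1e} generations")
```

With these illustrative values the stepwise route needs on the order of 10^8 generations, while the single leap needs on the order of 10^40.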

What we will see is that natural selection is not only inadequate to create complexity in the evolution of life, but especially incapable of creating complexity when it comes to the origin of life. But it gets far worse for materialism. The aspect of reality that produces the greatest degree of complexity over the shortest period of time is the human mind. Explaining why this is the case will be the most important focus of the paper.

The origin of life is a separate study from Neo-Darwinism. The origin of life is recognized as "unsolved," but materialists invoke "promissory materialism" when confronted with such profound gaps in the explanatory narrative of nature's ability to produce complexity.

Consciousness is also an unsolved problem, but here again materialists will tell us, with the highest level of assurance, that they know consciousness and all thought are reducible to brain chemistry.

Rest assured, materialist scientists are busy trying to show that these seemingly intractable problems, these apparent limits on what material causes in nature can achieve, are not insurmountable. The goal of the scientific enterprise is to describe or model all of reality in purely naturalistic terms. Their confidence that all phenomena will be explainable in purely naturalistic terms seems to have arisen from what they believe to have been the crowning achievement of twentieth-century science: the establishment of the Modern Synthesis ("Neo-Darwinism"). All other unsolved problems, they expect, will eventually fall in line. The ultimate goal of many materialist scientists is to convince us all that they, and only they, are the experts and, more importantly, that eternal annihilation is our ultimate destiny.

3.3 Is the Universe Causally Closed?

Most materialists view reality—the universe—as a physically causally closed system that does not permit any outside influence. Classical physics seems to discount the possibility of an influence or interaction by some imagined immaterial agent or force. Classical physics was deterministic in theory and precluded the idea of free will and mind-brain interaction where mind is understood to be immaterial.

Therefore, materialists study reality under the assumption that natural laws govern all events, i.e. that there are no outside immaterial influences. This doctrinal exclusion of any outside immaterial forces is referred to as "Methodological Naturalism." For some, methodological naturalism does not deny that nonmaterial influences may exist; it holds only that, when conducting science, such influences cannot be assumed to affect empirical research. Methodological naturalism started out as a method of inquiry but has evolved into a wholehearted acceptance of materialism for the vast majority of academic scientists.

Is the universe causally closed? William Hasker, a philosopher of mind at Huntington University, says this about the causal closure argument (as applied to substance dualism):

“The hoariest objection specifically to Cartesian dualism (but one still frequently taken as decisive) is that, because of the great disparity between mental and physical substances, causal interaction between them is unintelligible and impossible. This argument may well hold the all-time record for overrated objections to major philosophical positions.”

Tufts University philosopher Daniel Dennett uses the causal closure argument in his book Consciousness Explained and regards it as decisive:

“No physical energy or mass is associated with them [signals from the mind to the brain]. How then do they make a difference to what happens in the brain cells they must affect, if the mind is to have any influence over the body?”

The problem with this statement is that it rests on classical ("Newtonian") physics. Quantum mechanics, at least in its most commonly accepted interpretation, the "Copenhagen Interpretation," overturns the deterministic and mechanistic view of the universe by showing that nature is inherently probabilistic. By extension, quantum physics is an enabling theory for an open universe and therefore offers grounds for denying the principle of the causal closure of the universe.

It should be noted that there are some interpretations of quantum mechanics that deny that nature is inherently probabilistic and view nature as deterministic nonetheless. The “Many Worlds” interpretation is one such example, but it is a minority (although growing) view.

Quantum physicist Henry Stapp notes that extending classical physics to the brain/mind would have our thoughts controlled “bottom-up” by the deterministic motion of particles and fields. Stapp comments on Dennett’s statement (above),

“Classical physics allows no mechanism for a “top-down” conscious influence…[and] there’s a quantum loophole in Dennett’s argument: No mass or energy is necessarily required to determine which of the set of possible states a [quantum] wave function will collapse upon observation.”

Note that the “quantum wave function” is discussed below. Stapp continues,

“In view of the turmoil that has engulfed philosophy during the three centuries since Newton’s successors cut the bond between mind and matter, the re-bonding achieved by physicists during the first half of the twentieth century must be seen as a momentous development.

“The only objections I know to applying the basic principles of orthodox contemporary physics to brain dynamics are, first, the forcefully expressed opinions of some non-physicists that the classical approximation provides an entirely adequate foundation for understanding mind-brain dynamics, in spite of quantum calculations that indicate just the opposite; and second, the opinions of some conservative physicists, who, apparently for philosophical reasons, contend that the successful orthodox quantum theory, which is intrinsically dualistic, should be replaced by a theory that re-converts human consciousness into a causally inert witness to the mindless dance of atoms, as it was in 1900. Neither of these opinions has any rational basis in contemporary physics.”

The claim of the Copenhagen interpretation is that the probabilistic nature of quantum mechanics is not a temporary feature that will eventually be replaced by a deterministic theory, but instead must be considered “a final renunciation of the classical idea of ‘causality.’” There is an intrinsic randomness associated with observations of microscopic particles such as photons, electrons, and atoms, and even larger particles and molecules behave as “probability waves.”

The probability wave can perhaps best be thought of as an unrealized potential that materializes in a random, i.e. probabilistic, way. The probability wave is not really a physical wave; it is an abstraction that represents a known probability distribution for where a particle will materialize once observed. Probability waves “collapse,” that is, become distinct particles at a particular location, when they are “observed,” and they collapse with an inherent randomness. How does nature decide on a particular result? No one knows; nature gives only probabilities.

Here is how UC Santa Cruz physicists Bruce Rosenblum and Fred Kuttner summarize it in their book Quantum Enigma: Physics Encounters Consciousness:

“Quantum physics does not tell the probability of where an object is, but rather the probability that, if you look, you will observe the object at a particular place. The object has no “actual position” before that position is observed. In quantum mechanics the position of an object is not independent of its observation at that position. The observed cannot be separated from the observer.”
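What a “known probability distribution” over outcomes means can be illustrated with a short classical simulation. This sketch assumes the standard Born rule (the probability of an outcome is the squared magnitude of its amplitude), which the text does not name, and uses a hypothetical two-outcome state. Pseudo-random sampling on a computer only mimics the statistics of repeated observations; it says nothing about which interpretation of quantum mechanics is correct.

```python
import random
from collections import Counter


def born_probabilities(amplitudes: list[complex]) -> list[float]:
    """Probability of each outcome is |amplitude|**2, normalized to sum to 1."""
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    return [w / total for w in weights]


def observe(amplitudes: list[complex], rng: random.Random) -> int:
    """Draw one outcome index at random with Born-rule probabilities."""
    probs = born_probabilities(amplitudes)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical two-outcome state with amplitudes 0.6 and 0.8i (36% / 64%).
state = [0.6, 0.8j]
rng = random.Random(42)  # fixed seed so the run is repeatable
counts = Counter(observe(state, rng) for _ in range(10_000))
print(born_probabilities(state))
print(counts)
```

Each individual call to `observe` is unpredictable, yet over many runs the tallies settle near 36% and 64%, which is the sense in which nature "gives only probabilities."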

What constitutes an “observation”? It is a measurement or detection resulting from an interaction between a classical (large) object and a quantum object (a microscopic particle). A human observer does not appear to be necessary, although some claim that a human observer is ultimately required. This is the subject of heated debate.

There is an uncertainty inherent in the properties of all wave-like systems, expressed by the Heisenberg uncertainty principle, that arises in quantum mechanics simply from the matter-wave nature of all quantum objects. It is a fundamental property of quantum systems. This fundamental property means that the universe may not be causally closed, and further that there is no way for human observers to determine whether it is or not.
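The uncertainty principle for position and momentum is usually written Δx·Δp ≥ ħ/2. A worked example, assuming an electron confined to roughly the width of an atom (Δx = 0.1 nm, an illustrative value), shows how large the minimum spread in momentum and velocity becomes at that scale. The constants are CODATA 2018 values.

```python
HBAR = 1.054_571_817e-34         # reduced Planck constant, J*s
M_ELECTRON = 9.109_383_7015e-31  # electron rest mass, kg


def min_momentum_uncertainty(delta_x: float) -> float:
    """Smallest delta_p (kg*m/s) allowed by delta_x * delta_p >= hbar / 2."""
    return HBAR / (2 * delta_x)


delta_x = 1e-10  # assumed confinement width: about one atom (0.1 nm)
delta_p = min_momentum_uncertainty(delta_x)
delta_v = delta_p / M_ELECTRON  # corresponding minimum velocity spread, m/s
print(f"delta_p >= {delta_p:.2e} kg*m/s, delta_v >= {delta_v:.2e} m/s")
```

The minimum velocity spread comes out at roughly 5.8 × 10^5 m/s, which is why quantum effects cannot be ignored at atomic scales.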

4 Complexity of Life

The first step in understanding why it is implausible to suppose that Neo-Darwinism, or any other purely materialist theory of evolution, can account for the complex specified information exhibited by living organisms is to look at the staggering complexity of living organisms revealed by current research. We can then assess whether material causation can account for this complexity and, if not, ascribe it to agency causation: intelligent design.

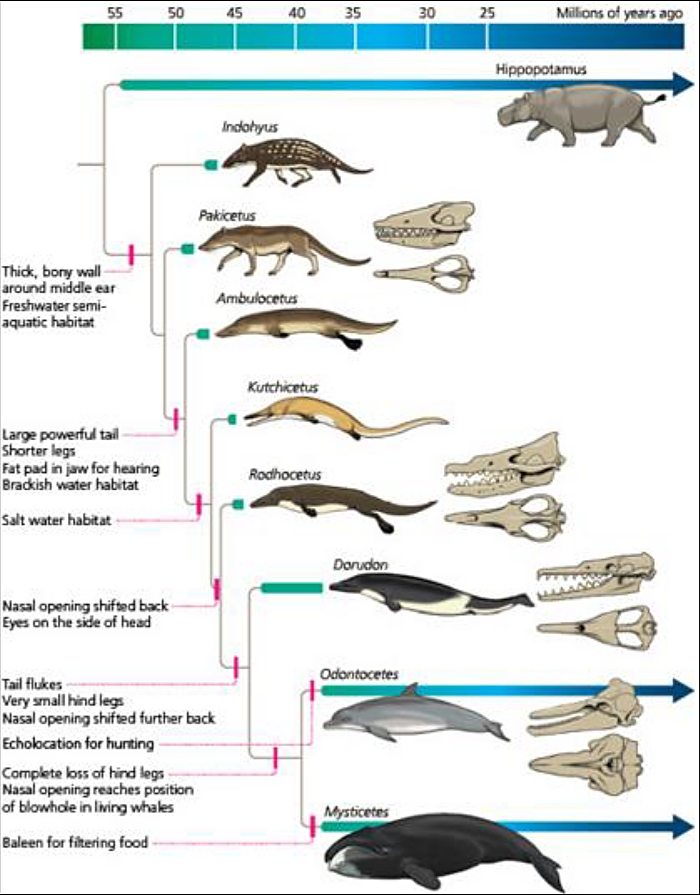

You will be hearing a lot about microbiologist Dr. James Shapiro in this paper, so let me introduce him here in full. From Wikipedia: “James Shapiro was elected to Phi Beta Kappa in 1963 and was a Marshall Scholar from 1964 to 1966. He won the Darwin Prize Visiting Professorship of the University of Edinburgh in 1993. In 1994, he was elected as a fellow of the American Association for the Advancement of Science for ‘innovative and creative interpretations of bacterial genetics and growth, especially the action of mobile genetic elements and the formation of bacterial colonies.’ And in 2001, he was made an honorary officer of the Order of the British Empire for his service to the Marshall Scholarship program. In 2014 he was chosen to give the 3rd annual ‘Nobel Prize Laureate - Robert G. Edwards’ lecture.”

Shapiro:

“The cell is a multilevel information-processing entity, and the genome is only a part of the entire interactive complex. They acquire information about external and internal conditions, transmit and process that information inside the cell, compute the appropriate biochemical or biomechanical response, and activate the molecules needed to execute that response.”

Bruce Alberts, president of the National Academy of Sciences:

“We have always underestimated cells. … The entire cell can be viewed as a factory that contains an elaborate network of interlocking assembly lines, each of which is composed of a set of large protein machines. … Why do we call the large protein assemblies that underlie cell function protein machines? Precisely because, like machines invented by humans to deal efficiently with the macroscopic world, these protein assemblies contain highly coordinated moving parts.”

Neither James Shapiro nor Bruce Alberts would claim that naturalistic processes cannot account for these features of living organisms. But that position is an assumption based on their materialist predisposition.

Intelligent Design proponent Michael Denton, a medical doctor and molecular biologist, comments:

“Molecular biology has shown that even the simplest of all living systems on the earth today, bacterial cells, are exceedingly complex objects. Although the tiniest bacterial cells are incredibly small, weighing less than 10^-12 gms, each is in effect a veritable micro-miniaturized factory containing thousands of exquisitely designed pieces of intricate molecular machinery, made up altogether of one hundred thousand million atoms, far more complicated than any machine built by man and absolutely without parallel in the nonliving world.”

4.1 Life Primer

Since proteins carry out all essential biologic functions, assessing complexity in terms of proteins is a good place to start. To understand the role of proteins, it is helpful to understand how they work in living organisms. Neo-Darwinists make light of the average person's sense of incredulity when that person observes the complexity of living things and expresses doubt about the theory. Making light of, or being dismissive about, the complexity of life is not an argument; it is a tactic used to defuse what is a real problem.

Please watch the videos at the links below, which give an overview of life's essential processes (in the first video, skip to the 1:15 mark and view until the 6:30 mark).

https://www.youtube.com/watch?v=Kzgnl5-8WAk

https://www.youtube.com/watch?v=yqESR7E4b_8&list=PLC6B226B216FA8FFD

The functions shown are carried out by complex protein and RNA assemblies and molecular machines—Helicase, DNA polymerase, RNA polymerase, Spliceosome, Ribosomes, etc.

Proteins are made up of a chain of linked amino acids (“residues”). A typical protein has 300 or more amino acids, often many more, though some have fewer. The sequence of amino acids in a protein is fairly specific, meaning that a few changes here and there will often render a protein non-functional, i.e. unable to fold properly and therefore unable to catalyze a reaction or serve as any sort of useful structure. Proteins have various domains, functional segments that can be swapped and assembled like Lego bricks.

The following subsections briefly discuss the complexity of some of the key molecular complexes you saw in the animations in terms of protein composition. These molecular complexes support the essential functions in living organisms. All the examples of molecular machines are subcellular, i.e. at the molecular level (except the eye).

I can cover only the tip of the iceberg of the complexity of these marvelous molecular devices. By way of example, there is an entire 300-plus page book just on the Helicase, the molecular device that unwinds the DNA in preparation for DNA replication.

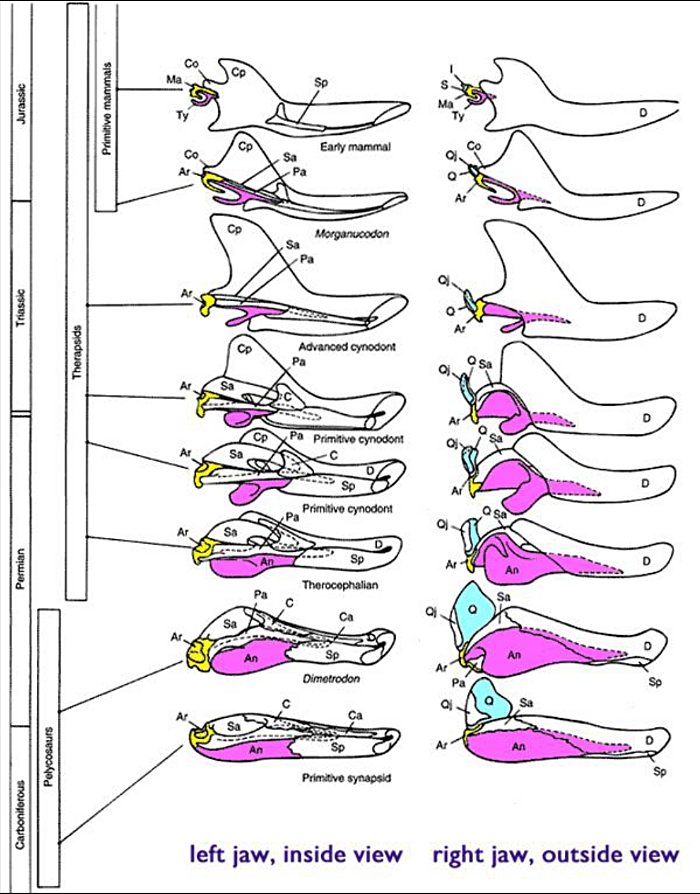

4.1.1 DNA Polymerase

DNA polymerase is the protein complex most central to DNA replication. A cell copies its DNA every cycle, and without the ability to copy DNA reliably there would be no life. There are a variety of types of DNA polymerase depending on the type of organism, and they even vary by cell type. These protein complexes work in pairs to create two identical double-stranded DNA molecules from a single original double-stranded DNA molecule.

DNA polymerase works with the Helicase and a variety of other protein complexes during replication. DNA polymerase synthesizes new strands of DNA at a rate of about 749 nucleotides per second. The error rate during replication is believed to be in the range of 10^-7 to 10^-8 per nucleotide, based on studies of E. coli and bacteriophage DNA replication. A proofreading and error-correction feature is built into the DNA polymerase protein complex.
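The quoted speed and error rate can be turned into rough per-copy figures. This sketch assumes a genome of about 4.6 million base pairs (approximately the size of the E. coli genome, a number not given in the text) and assumes bidirectional replication with two forks, each moving at the quoted 749 nucleotides per second; real replication involves many more factors.

```python
GENOME_BP = 4.6e6     # assumed: approximate E. coli genome size, base pairs
RATE_NT_PER_S = 749   # polymerase speed quoted in the text


def expected_errors(length_bp: float, error_rate: float) -> float:
    """Expected copying mistakes for one pass over length_bp bases."""
    return length_bp * error_rate


def replication_minutes(length_bp: float, rate: float, forks: int = 2) -> float:
    """Minutes to copy the genome if `forks` replication forks split the work
    equally (bidirectional replication from one origin uses two forks)."""
    return length_bp / forks / rate / 60


for rate in (1e-7, 1e-8):
    errors = expected_errors(GENOME_BP, rate)
    print(f"error rate {rate:.0e}: ~{errors:.3f} errors per copy")
print(f"about {replication_minutes(GENOME_BP, RATE_NT_PER_S):.0f} minutes per copy")
```

Under those assumptions the copy takes on the order of 50 minutes and introduces fewer than one error per genome copy at either error rate.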

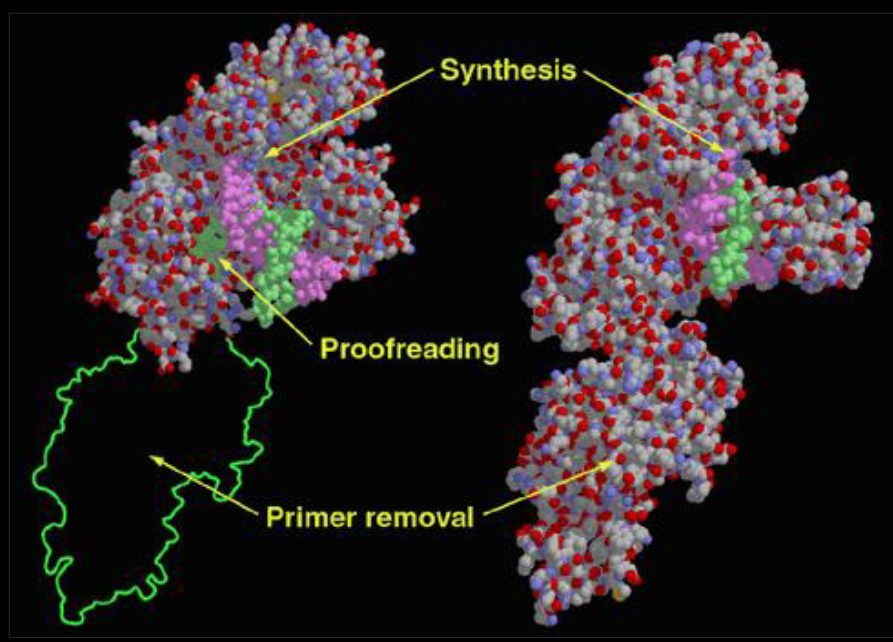

The DNA polymerase is composed of two protein domains, a polymerase domain and a proofreading domain. The polymerase domain is composed of three subdomains. There are perhaps as many as fifteen (15) different genes that encode DNA polymerase proteins.

4.1.2 DNA Helicase

In order for DNA polymerase to replicate the DNA, the DNA has to be unzipped by a molecular machine called the DNA Helicase. Other types of Helicases facilitate a variety of metabolic processes involving RNA, such as translation, transcription, ribosome biogenesis, RNA splicing, RNA transport, RNA editing, and RNA degradation. The DNA Helicase moves along in front of what is called the “replication fork” (where the double-stranded DNA splits), which enables DNA replication. The DNA Helicase continuously opens and unwinds the DNA double helix with a rotational speed of up to 10,000 rotations per minute, which rivals the rotational speed of jet engine turbines. Here is an image of the DNA Helicase.

The DNA Helicase is very complex; as noted above, an entire textbook is dedicated to describing its structures and functions.

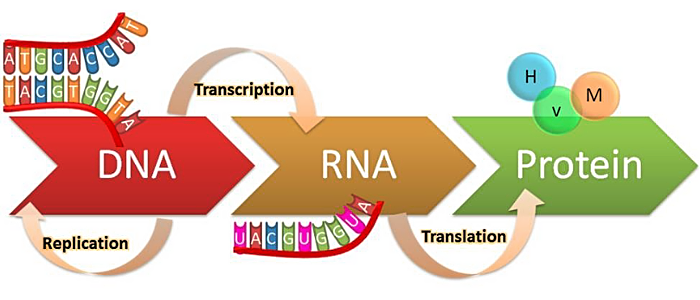

4.1.3 RNA Transcription – Make RNA from DNA - RNA Polymerase

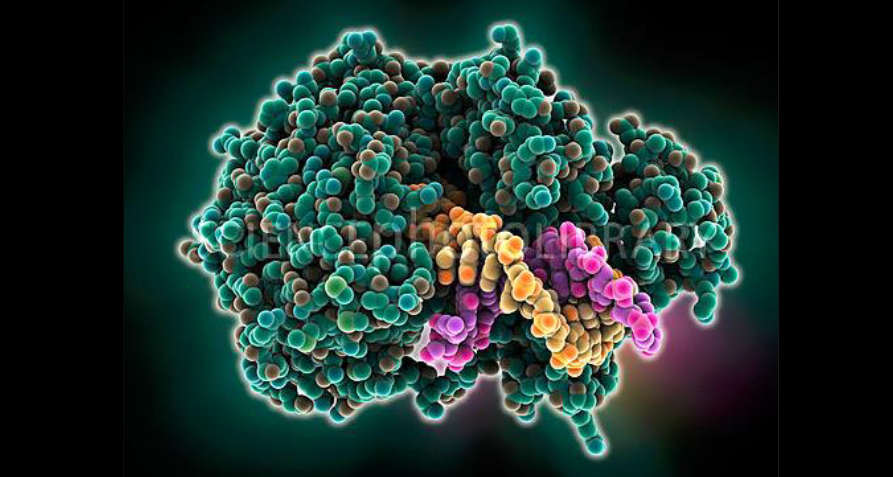

The RNA Polymerase, along with several other molecules collectively referred to as the “Transcription Initiation Complex,” copies one strand of the DNA double helix into mRNA in a process called transcription. The RNA Polymerase complex is composed of twelve (12) protein subunits totaling about 3000 amino acids. Two of the subunits are large, and the rest are relatively small and unique to each type of polymerase. RNA polymerase transcribes the DNA at a rate of about 50 bases per second. Transcribing a typical mRNA that codes for an average protein takes about 20 seconds in a prokaryotic cell and about 3 minutes in a eukaryotic cell. Watch the animation at the link below to see this marvelous molecular machine in action.

https://www.youtube.com/watch?v=SMtWvDbfHLo
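The transcription figures quoted for RNA polymerase are related by simple arithmetic: length = rate × time. The sketch below works backward from the quoted 50 bases per second to the transcript lengths implied by the 20-second and 3-minute figures. Reading the longer eukaryotic figure as reflecting transcripts that still contain introns is an assumption, not something the text states.

```python
RATE_NT_PER_S = 50  # RNA polymerase speed quoted in the text


def transcription_seconds(length_nt: int, rate: float = RATE_NT_PER_S) -> float:
    """Time to transcribe a transcript of length_nt nucleotides."""
    return length_nt / rate


def transcript_length(seconds: float, rate: float = RATE_NT_PER_S) -> float:
    """Nucleotides transcribed in the given number of seconds."""
    return seconds * rate


print(transcript_length(20))      # prokaryotic figure from the text
print(transcript_length(3 * 60))  # eukaryotic figure from the text
```

At 50 bases per second, 20 seconds corresponds to about 1,000 nucleotides and 3 minutes to about 9,000.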

4.1.4 RNA Splicing – Making RNA Transcripts - Spliceosome

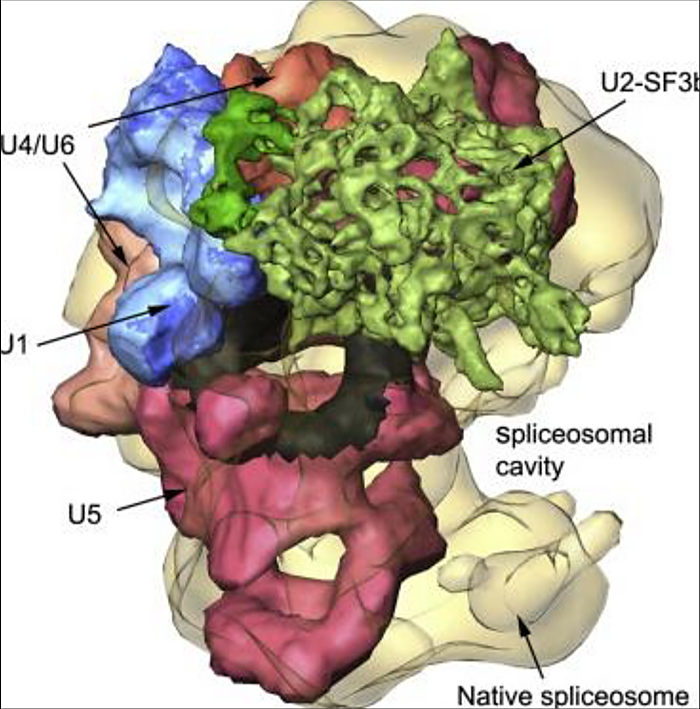

The spliceosome is perhaps the most remarkable molecular machine in the cell. It has been described as one of "the most complex macromolecular machines known, composed of as many as 300 distinct proteins and five RNAs". The small RNAs in its subunits are typically about 100 to 300 nucleotides long.

Watch the animations at the links below to see this marvelous molecular machine at work on the initial mRNA transcript. When genes are transcribed from DNA, an mRNA is produced, but the protein-coding regions (“exons”) of the initial transcript are separated by long stretches of non-coding regions called “introns.” Introns typically make up 80 to 90 percent of a raw mRNA transcript. These introns must be removed in a process called splicing: the spliceosome cuts out the non-coding regions and rejoins the exons, i.e. the protein-coding segments.

https://www.youtube.com/watch?v=aVgwr0QpYNE

and here:

https://www.youtube.com/watch?v=tijanyUbY1A

and here:

https://www.youtube.com/watch?v=7DokEF-kxaI

and here:

https://www.youtube.com/watch?v=YgmoHtLGb5c
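At the level of the sequence alone, splicing amounts to cutting out intervals and joining what remains. The sketch below is a purely illustrative string operation on a made-up transcript: the intron positions are supplied by hand (the real spliceosome must recognize them chemically), the lowercase introns are written with the common GU...AG boundaries for realism but the function does not check them, and `splice` is a hypothetical helper, not a bioinformatics library call.

```python
def splice(pre_mrna: str, introns: list[tuple[int, int]]) -> str:
    """Remove intron intervals [start, end) and join the remaining exons.
    Intervals are 0-based, half-open, and must not overlap."""
    exons = []
    pos = 0
    for start, end in sorted(introns):
        if start < pos:
            raise ValueError("overlapping introns")
        exons.append(pre_mrna[pos:start])  # keep the exon before this intron
        pos = end                          # skip over the intron
    exons.append(pre_mrna[pos:])           # keep the final exon
    return "".join(exons)


# Hypothetical transcript: uppercase = exons, lowercase = introns.
pre = "AUGGCC" + "guaaag" + "UUUGGC" + "guauag" + "UAA"
mature = splice(pre, [(6, 12), (18, 24)])
print(mature)  # AUGGCCUUUGGCUAA
```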

4.1.5 Ribosome Translation – Make Proteins from RNA

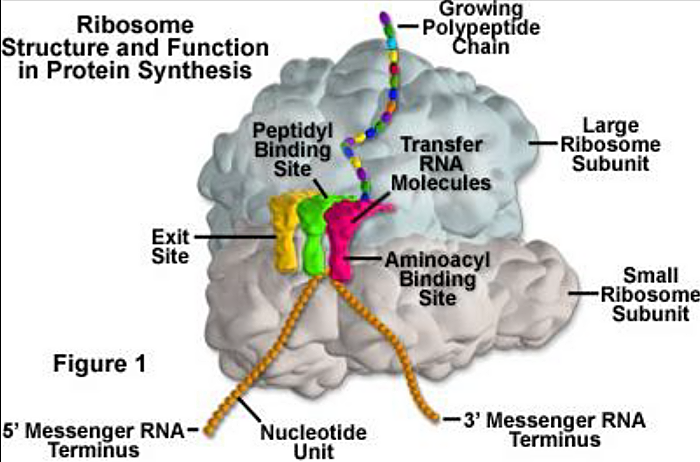

The Ribosome is a highly complex molecular machine used to synthesize proteins from mRNA following transcription and splicing. The process of protein synthesis is called “translation.” The ribosome, along with a variety of other associated molecules, links amino acids together as they are delivered by transfer RNAs (tRNAs), each of which carries a specific amino acid to the ribosome. The ribosome contains about eighty (80) distinct proteins and several different RNAs. So the ribosome manufactures proteins and is itself composed of many proteins.

The following is a link to an animation of the ribosome in action:

https://www.youtube.com/watch?v=TfYf_rPWUdY
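Translation reads the mRNA three bases (one codon) at a time. The following sketch uses a lookup table holding only a handful of the 64 codons of the standard genetic code, enough for the hypothetical example sequences. In the cell this lookup is carried out physically by tRNAs pairing with codons inside the ribosome; the dictionary merely stands in for that machinery.

```python
# A small subset of the standard genetic code (RNA codon -> one-letter amino acid).
CODON_TABLE = {
    "AUG": "M",                          # methionine, also the usual start codon
    "UUU": "F", "UUC": "F",              # phenylalanine
    "GGC": "G", "GGU": "G",              # glycine
    "GCC": "A", "GCU": "A",              # alanine
    "AAA": "K",                          # lysine
    "UGG": "W",                          # tryptophan
    "UAA": "*", "UAG": "*", "UGA": "*",  # stop codons
}


def translate(mrna: str) -> str:
    """Read codons from the first AUG until a stop codon."""
    start = mrna.find("AUG")
    if start == -1:
        return ""
    protein = []
    for i in range(start, len(mrna) - 2, 3):
        aa = CODON_TABLE[mrna[i:i + 3]]  # KeyError for codons outside this subset
        if aa == "*":
            break
        protein.append(aa)
    return "".join(protein)


print(translate("AUGGCCUUUGGCUAA"))  # MAFG
```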

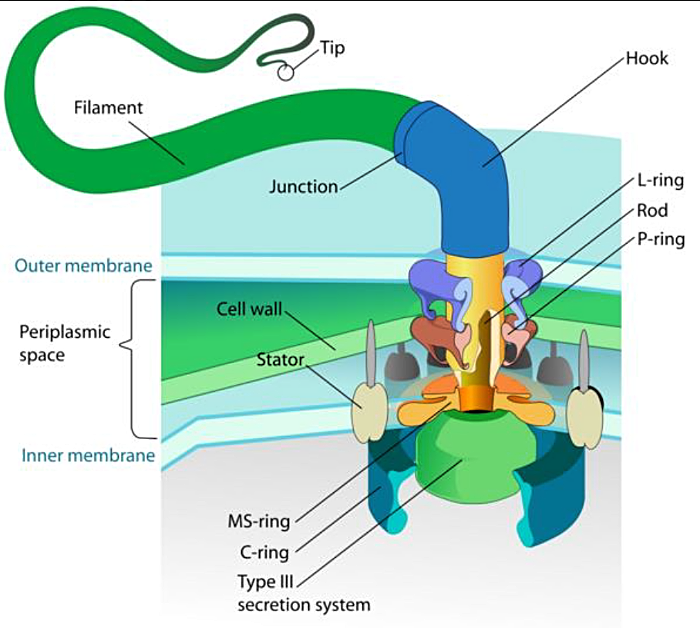

4.1.6 Bacteria Flagellum

Molecular biologist and Intelligent Design proponent Michael Behe of Lehigh University advanced the concept of “Irreducible Complexity” in his groundbreaking 1996 book, Darwin's Black Box. Irreducible complexity simply means that if, in a system with many components, some or all of those components are essential for the system to perform its function, the system is “irreducibly complex.” In other words, you cannot reduce its complexity beyond a certain point without destroying its function. By extension, this means there is no way to build such an irreducibly complex system incrementally through Neo-Darwinian mechanisms.

In all molecular machines there are many essential components because of the interdependencies between components. Irreducible complexity can be demonstrated by conducting what are called knockout experiments, which involve disabling the gene for a specific protein in the molecular machine to determine whether that protein is essential to build the machine or to allow it to perform its function. Irreducible complexity is a specific instance of complex specified information as it applies to living organisms.
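The logic of a knockout screen can be sketched as follows: remove one component at a time and test whether function survives. Everything here is hypothetical: the part names, the rule that the toy machine needs parts A, B and C, and the `works` test standing in for a laboratory assay. Note that a single-knockout screen only reveals parts that are individually essential; it does not test combinations of removals.

```python
from typing import Callable


def knockout_screen(parts: set[str],
                    works: Callable[[frozenset[str]], bool]) -> dict[str, bool]:
    """Remove each part in turn; True means function was lost (part essential)."""
    return {p: not works(frozenset(parts - {p})) for p in sorted(parts)}


def toy_machine_works(present: frozenset[str]) -> bool:
    """Hypothetical machine: functions only if A, B and C are all present."""
    return {"A", "B", "C"} <= present


parts = {"A", "B", "C", "D"}  # D is a dispensable accessory part
essential = knockout_screen(parts, toy_machine_works)
print(essential)  # {'A': True, 'B': True, 'C': True, 'D': False}
```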

One of the examples Behe used in his book was the bacterial flagellum. The flagellum is composed of about forty (40) or more proteins, all of which are essential based on knockout experiments. For a detailed description of the flagellum and its operation, refer to:

https://www.youtube.com/watch?v=_u_LYJGDopA

To counter the idea of irreducible complexity, supporters of Neo-Darwinism offer the idea of “cooption,” or exaptation, which proposes that some of the components of the flagellum may have evolved to carry out some other function. The genes were then duplicated, mutated, coopted (reused), and gradually integrated to produce the new function: the flagellum.

More specifically, they point out that ten (10) of the genes that code for proteins of the flagellum are also present in another bacterial molecular machine, the Type III secretory system (TTSS). The TTSS pump transports proteins across the bacterial cell membrane. It is therefore possible, they argue, that the TTSS evolved first and some of its genes (perhaps all ten) were duplicated and coopted for the flagellum.

There are a few problems with this cooption theory. First, the flagellum has perhaps as many as forty (40) proteins, so you would still need to explain where the other thirty (30) came from.

Second, it appears that the flagellum arose before the TTSS, and therefore the flagellum could not have coopted the TTSS proteins. Third, even if the TTSS evolved first and its ten genes were coopted, you would still need to explain the origin of the TTSS itself and its ten (10) genes.

With this type of storytelling using the TTSS in the case of the flagellum, Neo-Darwinists have pretty much declared victory, claimed that irreducible complexity has been debunked, and moved on. In fact, if you were to google “irreducible complexity,” you would no doubt see many articles claiming that it has been debunked.

When Michael Behe’s book came out, James Shapiro declared in National Review that:

"There are no detailed Darwinian accounts for the evolution of any fundamental biochemical or cellular system, only a variety of wishful speculations."

The debate has been long, detailed, and drawn out, with a nasty edge to it. My view is that the concept of irreducible complexity has not been debunked at all, not even close. I find the explanations offered by Neo-Darwinists to be cartoonish. That irreducible complexity has not been debunked is no doubt what renowned atheist philosopher Thomas Nagel was referring to in his book Mind and Cosmos: Why the Materialist Neo-Darwinian Conception of Nature Is Almost Certainly False, when he said:

“Those who have seriously criticized these arguments [primarily the argument for irreducible complexity] have certainly shown that there are ways to resist the design conclusion; but the general force of the negative part of the intelligent design position—skepticism about the likelihood of the orthodox reductive view, given the available evidence—does not appear to me to have been destroyed in these exchanges.”

For a good overview of the debate without much of the typical nastiness, refer to the following video (view to the 20:10 mark):

https://www.youtube.com/watch?v=F4uJ6y5Y29g

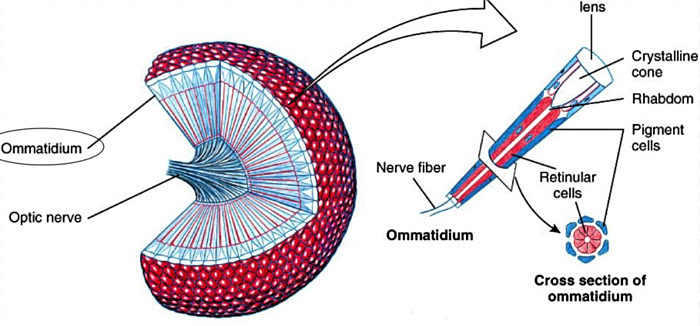

4.1.7 Eye

Thus far we have been looking at complexity at the level of molecular machines. These devices are all subcellular, and many of the functions they perform must have been in place at some level in the very earliest cells. Let us now look at a multicellular organ: the eye.

In the Cambrian Explosion we see a vast array of new animal body plans with complex new features such as the eye. When looking at the complexity of a particular adaptation, such as an organ like the eye, the best approach is to count the number of new cell types (i.e. tissues) and then, if possible, how many new proteins each new cell type would require. Ultimately what we need to know is how many new proteins (and hence genes to define them) are required for a new complex feature. This gives us a rough sense of the complexity and the probabilities involved. This is difficult to do, but we can make an educated guess, which would have to be based on the ratio of DNA sequences that yield a viable protein to the overall set of possible DNA sequences.

The Neo-Darwinian account of the evolution of the eye is really not evidence, but rather a collection of imaginative stories. What science there is rests on the most simplistic, high-level description of how the eye might have evolved at the gross anatomical level.

Trilobites appeared as part of the Cambrian explosion. The trilobite eye is “very similar to the structure seen in the eyes of today's horseshoe crabs.”

http://news.sciencemag.org/paleontology/2013/03/looking-trilobite-eye

From the diagram below you can see that there are at least seven cell types in the compound eye and they all have to fit together. It is not clear how many new proteins there are in each cell type of the compound eye. Clearly though, many new proteins would be necessary.

Also, an eye would have no value at all without a way to transmit the signal to the brain. And the brain would have to be equipped with a way to process the signal to do something related to survival, that being a requirement of evolution by natural selection.

And the information content, expressed as the number of new proteins (and their complexity), is one thing, but it says nothing about how all the various types of cells that make up the eye are arranged symmetrically and in a way that allows the eye to function.

Moreover, what is often glossed over in Neo-Darwinian explanations of the eye is that the paleontological record of the Cambrian, for example, indicates that there were many new animals, and each new animal had a variety of new complex adaptations ranging from digestion to locomotion to the senses, including the eye. It is hard enough to imagine how these various features could have been built up piece by piece through mutation and selection, but how natural selection acts on multiple disparate nascent features at once is a further, unresolved problem.

4.2 Protein Sampling Problem

Clearly there are many proteins involved in the essential functions of living organisms, and making these proteins requires many other proteins. It is a massive chicken-and-egg problem. But how would one gain a sense of whether a process like Neo-Darwinism could create these marvelous molecular devices and features? How could you quantify their complex specified information content?

Since proteins are required for any biologic function, one way to gain at least some insight into the scope of the problem would be to determine how plausible (or not) it is for nature to find viable proteins by chance, i.e. how rare they are. To do this, one would need to assess the ratio of DNA sequences that code for viable proteins to DNA sequences that do not. It is analogous to asking: what is the ratio of sequences of, say, 300 words that form meaningful text to sequences of words that are unintelligible gibberish?

The problem involved in a random search through a large “space” of possible molecular sequences to find a viable sequence is often referred to as the “protein sampling problem.” It is fundamentally an information problem, a complex specified information problem in fact. Neo-Darwinists do their best to ignore it by claiming that the powers of natural selection enable the process to skirt around any improbabilities critics might throw their way. But Neo-Darwinism is a tandem process with two necessary causes, mutation and selection. Mutation must first supply the raw material of change, a functional protein, before there is anything for selection to act on. Furthermore, the process needs to discover DNA sequences that lead to viable proteins at each step along the way; otherwise they could not be selected.

Assessing the impact of the protein sampling problem is not easy given the size of protein space (20^300 combinations, assuming most proteins have about 300 amino acids and given that there are 20 different types of amino acids in living systems), but there have been estimates. Mathematician Hubert Yockey found that the probability of evolution finding the cytochrome-c protein sequence, which is only 150 amino acids (residues) long, is about one in 10^90. To put that number in perspective, hitting a target the size of a grain of sand in the Sahara amounts to about one chance in 10^20.

But this is a target for a specific protein, albeit a protein of modest size. What about the ratio of viable to inviable DNA-protein sequences when any function at all counts?

In the early 1990s, Robert Sauer, professor of biology at MIT, made an estimate for a protein of 92 residues (a small protein; most are about 300 amino acids, and some are 1000 amino acids). Sauer calculated that the ratio of DNA sequences leading to viable proteins to those yielding no viable protein is 1 in 10^64.

Biologist Doug Axe, working at Cambridge University, conducted the most recent such study in 2004. Dr. Axe's study is well documented in Stephen Meyer's books Signature in the Cell and Darwin's Doubt. Doug Axe and Stephen Meyer are Intelligent Design proponents. Axe's study assessed the significance of the sampling problem for a protein of 150 amino acids.

The outcome of his research was that the ratio of viable to inviable DNA sequences is 1 in 10^77. In other words, for every DNA sequence that yields a viable protein, there are roughly 10^77 DNA sequences that do not. For a larger (and more realistic) protein with 300 or more amino acids, the probability of finding a viable sequence becomes much smaller still.

Most functions in an organism require multiple proteins aggregated together to form a molecular machine or complex, and we saw that multiple protein complexes and machines are required for any essential cellular function. Because the probabilities of finding each protein multiply, you would add the exponents (the 77 in 10^77) for every protein in a particular molecular device, and for all the molecular devices that work together to achieve a particular function such as duplicating the DNA. Adding the exponents of all the proteins necessary to carry out any biologic function turns an extraordinarily daunting problem in probabilities into one that is hopelessly implausible.

It is important to note that typically only about 50% of the amino acids in a protein have to be precisely what they are for the protein to support its function. In other words, the degree of specificity is about 50%. The calculations above account for that.

To put 1 in 10^77 in perspective, there are only about 10^65 atoms in the Milky Way. Scientists have estimated that a total of about 10^30 organisms have lived on earth since the origin of life, and that there have been at most about 10^43 trials, i.e. mutational attempts, across all the genomes of all the organisms that have ever lived. Yet the Cambrian explosion, the period during which most animal body plans appeared, allows for only about 20 million years. (There is some debate about that, but if one were to take the fossil record at face value, these creatures appeared instantly.)
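Comparing the quoted trial count with the quoted rarity gives the expected number of successes. The following back-of-envelope Python sketch uses only the figures above (10^43 trials, 1 in 10^77 per trial) and assumes each trial is an independent random draw:

```python
import math
from fractions import Fraction

LOG10_TRIALS = 43  # ~10^43 mutational attempts in the history of life, as quoted above
LOG10_ODDS = 77    # 1 viable sequence in ~10^77 for a 150-residue protein, as quoted above

# Expected number of viable hits is n * p (the mean of a binomial count), computed exactly.
expected_hits = Fraction(10 ** LOG10_TRIALS, 10 ** LOG10_ODDS)
print(f"Expected viable hits: 10^-{LOG10_ODDS - LOG10_TRIALS}")

# Probability of at least one hit, 1 - (1 - p)^n, computed stably with log1p/expm1.
p = 10.0 ** -LOG10_ODDS
n = 10.0 ** LOG10_TRIALS
p_at_least_one = -math.expm1(n * math.log1p(-p))
print(f"P(at least one hit) ~ {p_at_least_one:.1e}")
```

When n × p is this small, the chance of at least one success is essentially equal to n × p, about 10^-34 on these assumptions.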

Clearly, if this research holds up, that is, if DNA sequences that yield viable proteins really are this sparse in sequence space, there is no plausible way that any of the necessary proteins of life could have arisen.

But there is one other possibility. Imagine a vast circle representing the space of all possible sequences for a protein of, say, 300 amino acids. That is an enormous space: 20^300 possibilities (there are 20 amino acids, each specified by one or more three-base codons in the DNA). Then imagine, inside that circle, many dots, each representing a family of DNA sequences that yield viable proteins. Together the dots would occupy roughly 1/10^77 of the overall sequence space that has to be sampled, i.e. searched through. The question is how tightly clustered these viable sequences are within that vast space. If they are tightly clustered, it is at least possible that, by a stroke of luck, nature could have hit the jackpot and found this isolated region of rich DNA-protein sequence space. From there, some would argue, incremental changes could have navigated to the others.

Doug Axe looked at this possibility and found that that is not the case. Proteins do not appear to be huddled together in a small sector in the vast sequence space where a lucky strike might yield a gold rush of proteins. The DNA sequences coding for viable proteins are dispersed throughout the sequence space. Therefore navigating from one viable sequence to another is highly improbable.

The depiction below shows several dots in a circle. In the analogy, the dots are the DNA sequences that code for viable proteins. The circle, all the empty space, is all the potential DNA sequences that yield nothing. The diagram is, of course, just a depiction and is not intended to be to scale. The vastness of the nothingness is grossly understated given the limitations of computer graphics.

Note that the dots are dispersed. This means that the DNA sequences of the large families of protein folds are very different from one another. Finding one does not mean you have struck it lucky and could find the rest with a few changes to the DNA sequence. In other words, areas of viable proteins are dispersed and could not be traversed by Neo-Darwinian mechanisms, because there are vast areas of nothingness where no set of incremental steps (each of which has to be functional if Neo-Darwinism is true) could be taken to get from one dot to the next.

Darwinists attempt to counter these poor probabilities by reducing the number of amino acids required in early proteins and by assuming less specificity. And, as always, they invoke the magical, mystical powers of natural selection. But selection is of little help if there is no smooth pathway from one viable protein to the next on which each step is useful, and more useful than the previous one. And it appears that there is no such smooth pathway from protein to protein through sequence space.

4.3 “Codes”

Another aspect of complexity in living systems is the existence of codes. No codes are known to have been produced by natural processes. Neo-Darwinists, of course, claim that the DNA transcription-translation code used to make proteins from DNA was created by natural processes, but this is a supposition based on materialism. You have heard about the DNA code, but there is actually more than one code. I will first discuss the DNA transcription-translation code, since it is the best understood and best known.

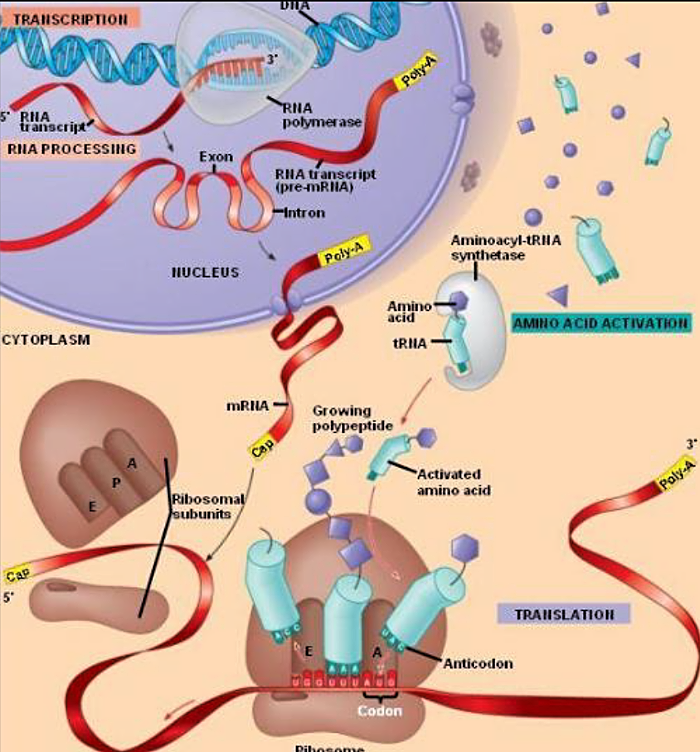

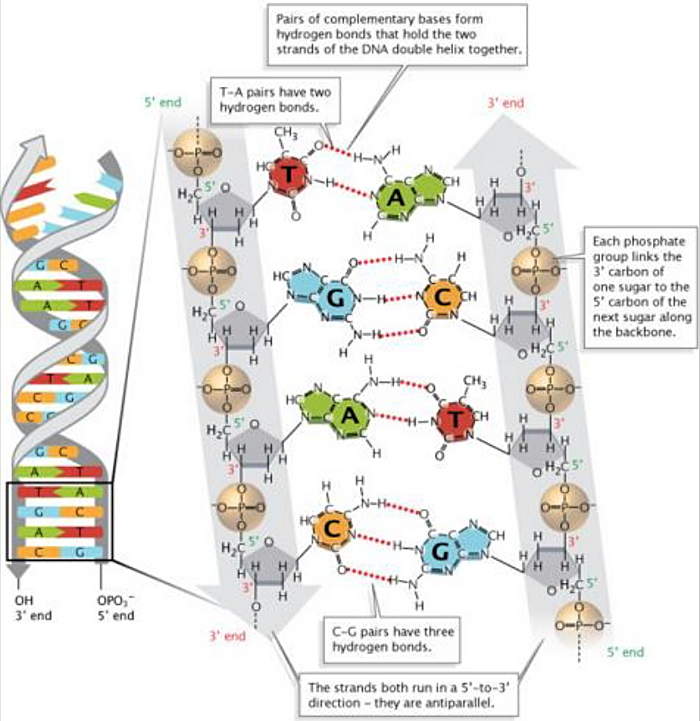

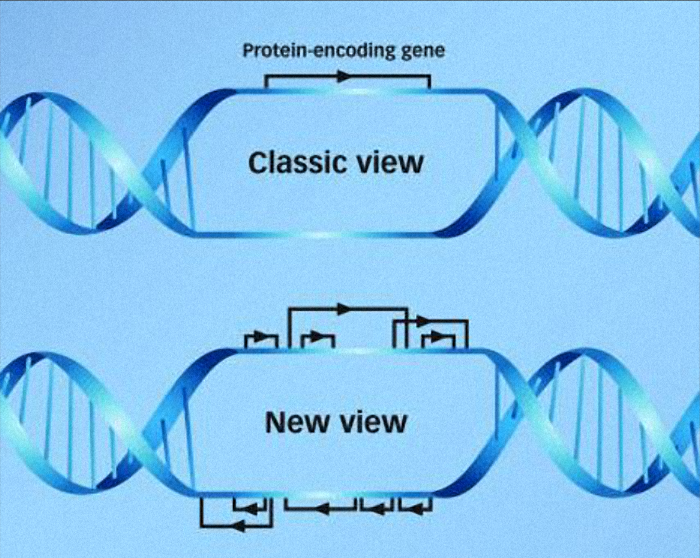

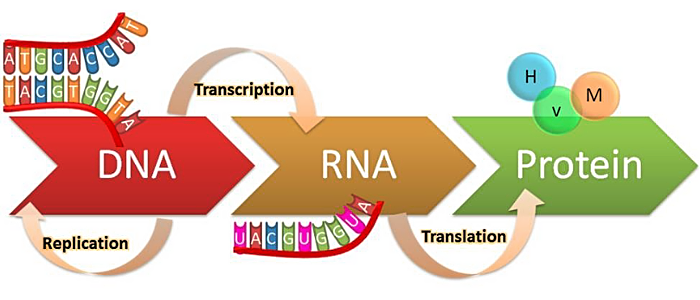

4.3.1 DNA Transcription-Translation Code

What is the DNA transcription-translation code? It is the way the information in DNA is first transcribed into RNA and then translated to make proteins. It is the most essential process in all of biology. It is important to note that this is a code and not a template, and there is a difference. A code involves an intermediary that allows for an unconstrained, one might say arbitrary, arrangement of things. In this case these “things” are the amino acids whose sequence defines a particular protein. Proteins, you recall, do all the essential work in the cell and form its structures. A template would be a case where the physical structure of the DNA itself directly defined the arrangement of protein components (amino acids).

In the diagram below, if you look closely, you will see a transfer RNA molecule that carries an amino acid on one end and matches a set of three bases to the messenger RNA inside the protein-assembly molecular machine called the ribosome. So the sequences in the DNA indirectly determine the sequences in the protein. It is a lot like a language. There are 4 letters (bases) that are read in groups of three called codons, giving 64 possible codons: 61 specify the 20 amino acids and 3 are stop signals. Each amino-acid codon is recognized by a complementary set of 3 bases (an anticodon) on a transfer RNA, which delivers the corresponding amino acid to be joined to the growing protein chain.
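Because the code is a mapping rather than a physical template, it can be modeled as a lookup table from codons to amino acids. The following minimal Python sketch encodes the standard genetic code (NCBI translation table 1) in one-letter amino-acid form. It is a simplification that assumes the reading frame starts at the first base, and it ignores start-codon search, tRNA charging and ribosome mechanics. The names `CODON_TABLE` and `translate` are illustrative.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code (NCBI table 1): first base varies slowest, third base fastest,
# each over T, C, A, G. '*' marks a stop codon.
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO)}


def translate(mrna: str) -> str:
    """Translate mRNA codon by codon from the first base until a stop codon or the end."""
    dna = mrna.upper().replace("U", "T")  # the table is written with DNA letters
    protein = []
    for i in range(0, len(dna) - 2, 3):
        aa = CODON_TABLE[dna[i:i + 3]]
        if aa == "*":
            break
        protein.append(aa)
    return "".join(protein)


print(len(CODON_TABLE))                        # 64
print(len(set(CODON_TABLE.values()) - {"*"}))  # 20
print(translate("AUGUUUGGCUAA"))               # MFG
```

The 64 codons collapse onto 20 amino acids plus stop, so several codons share the same meaning. That redundancy is visible directly in the table.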

So the DNA transcription-translation code is a code that is of course copied during DNA replication and is therefore involved in DNA inheritance.

But there are other “codes”, some of which strike one more as templates.

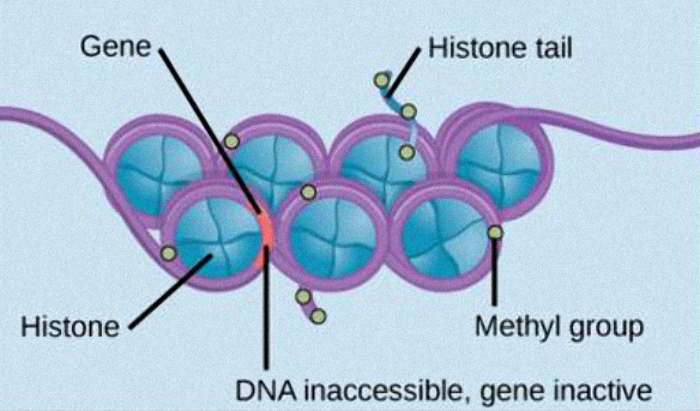

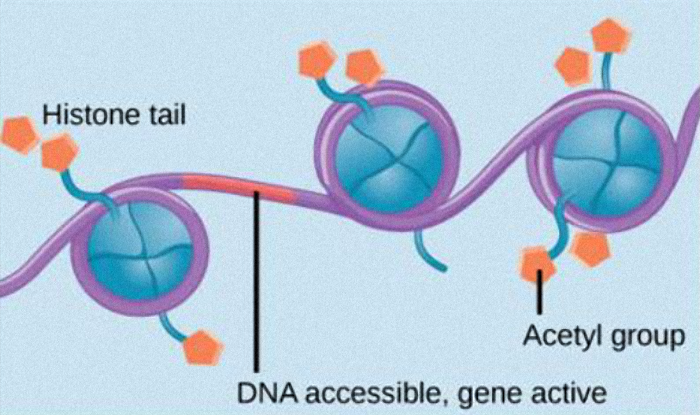

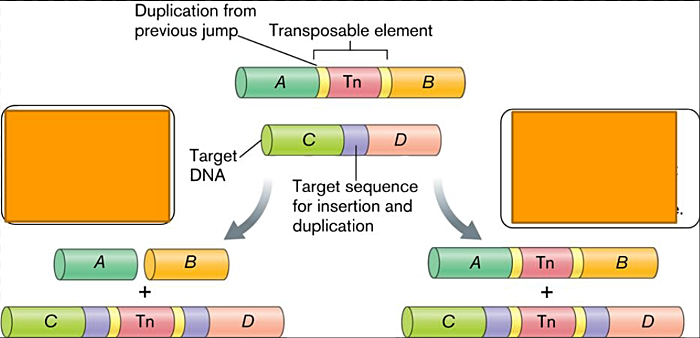

4.3.2 Epi-Genetic Code

I will talk more about epigenetics later in this paper. For now I will only say that there is a code, an “epigenetic code”, involving different types of modifications that can be applied to the DNA molecule and that act to inhibit the transcription (the making of a messenger RNA) of a particular sequence of DNA. Inhibiting transcription prevents that sequence from being translated into proteins.

4.3.3 Sugar Code

The sugar code, or glycocode, which is not part of the DNA, appears to play an important role in the development of multicellular animals. According to Intelligent Design developmental biologist Jonathan Wells, “surface glycans in early mouse embryos change in a highly ordered and stage specific manner. The research shows that they mediate cellular orientation, migration, and responses to regulatory factors during development.”

The sugar code is determined by a complex set of patterns in the sugar molecules on the cell surface, the cell membrane. These sugar molecules can be attached to lipids or proteins. Because of the complexity of the sugar molecules and the various forms they can take, they can carry large amounts of information on the cell membrane surface. In fact, by some estimates the glycocode can convey far more information than the genome itself.

The sugar code is interpreted by proteins called lectins. “These lectins associate specifically with three dimensional structures of other molecules.” The information in the sugars attached to the cell membrane appears to affect cell-to-cell communication and how cells interact with one another during development.

4.3.4 Membrane Code

There are also patterns in the three-dimensional arrangement of membrane-associated proteins, lipids and carbohydrates in the cell membrane that appear to play a role in the development of multicellular animals prior to the initiation of the genetic regulatory networks. The membrane code, as Jonathan Wells calls it, “determines the spatial gradient for things in the cell.” It does this by providing targets and sources for intracellular transport and signaling.

4.3.5 “Endogenous” Electric Code

Perhaps the most interesting “code” is the one carried by electric fields, although code might not be the proper term. “Endogenous” means that the fields are generated from within. Electric fields emanate from the cell membrane during the embryonic stages of development and appear to provide information important to the spatial layout of a developing animal. The inside of every living cell is electrically negative with respect to its external environment.

That electric fields are involved in the development of multi-cellular animals is evidenced by the fact that perturbations of these fields result in abnormalities in, or a halting of, the developmental process.

Please view the video at the link below. It shows that electric fields (bioelectric signals) cause groups of cells to form patterns marked by differing membrane voltage levels. When a developing frog embryo is stained with a dye, the negatively charged areas shine brightly while other areas appear darker, creating an “electric face.”

https://www.youtube.com/watch?v=ndFe5CaDTlI

4.3.6 Summary of Codes

There are a couple of important general points to be made about these codes. These codes, like all codes, have a source and a target: a coder and a decoder. This means that there is a reciprocal dependency. There would be no point to an encoding structure without the ability to decode. As we learned in the section on Complex Specified Information, the quantity of complexity is determined in part by dependencies and especially interdependencies. There is an obvious interdependency in a code by virtue of the need for an encoder and a decoder.

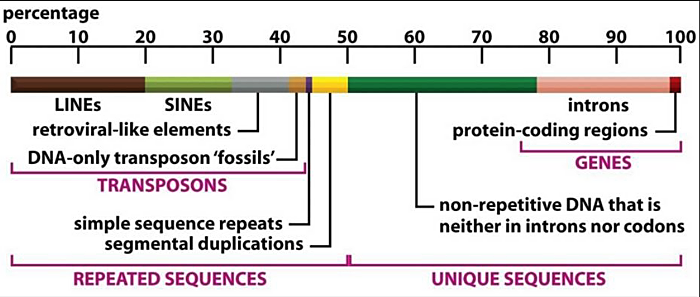

Moreover, and this is a controversial and contentious point, since these other codes are involved in defining the form of a multicellular animal during development and yet are not specified in the DNA, they cannot be part of the Neo-Darwinian process.

Therefore, it would appear that there is good evidence, despite the outcry from ultra-Darwinists, that the DNA gene regulatory networks (genetic algorithms or programs), once hailed as the secret of how an animal is given its form (because there was no alternative), do not completely specify the form of an animal. This represents another unwelcome surprise for Neo-Darwinism. The subject is still somewhat unresolved, but the preponderance of evidence seems to suggest that the DNA does not entirely specify the form of an animal.

Although it is true that DNA specifies the materials of a cell, including all the components involved in these other codes, RNA splicing and editing processes that occur after RNA is transcribed from DNA but before proteins are translated mean that DNA sequences do not fully specify the final functional forms of most membrane components. According to Jonathan Wells, “these networks must be localized in spatial domains for the embryo to differentiate into various cell types and organs, and those domains must be spatially ordered with respect to each other for the organism to develop its proper morphology.”

Furthermore, the information associated with the cell membrane, which appears to account for some important architectural aspects of the form of multicellular animals, is extraordinarily complex, and it is likely to be highly specific. Random changes to that information are therefore unlikely to be something a purely naturalistic account of the evolution of life could absorb, so membrane information is unlikely to rescue materialism as the Neo-Darwinian explanation falters.

As an interesting aside: you might occasionally hear of an Intelligent Design scientist who questions even Darwin's “fact of evolution”, i.e. common descent, and because of that you might be tempted to dismiss everything they say. However, you should know that this reluctance to accept common descent stems from this inconvenient finding (that the form of an animal appears not to be entirely determined by the DNA) and also from the number of new genes (“Orphan Genes”) in each new species. I will address this second point later in this paper. Epigenetics and horizontal gene transfer (also discussed later) are other reasons for questioning the doctrine of common descent. My feeling is that the truth or falseness of common descent is irrelevant to the question of design in nature, that is, teleology.

4.4 Summary – Complexity of Life

There are many details I have glossed over in this section. The effect of omitting these details is to have made Neo-Darwinism seem more plausible than it really is. For example, I have omitted any discussion of the energy requirements for these molecular machines to do their work, and I have not discussed the fact that proteins, in order to do anything, have to be folded in a precise three-dimensional way. In most cases proteins require another category of proteins, called chaperones, to do this. Some have claimed that if the protein folding process were entirely random, in other words not determined by physics and chemistry and unaided by chaperone proteins, even a single 100-residue protein could not find its functional conformation by chance in the entire history of the universe.
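The folding claim echoes the classic back-of-envelope estimate often called Levinthal's paradox. The following Python sketch reproduces that style of arithmetic. The inputs are assumptions chosen for illustration, not figures from this paper: 3 conformations per residue, 10^13 conformations sampled per second, and a universe age of about 13.8 billion years.

```python
import math

RESIDUES = 100                   # the 100-residue protein mentioned above
CONFORMATIONS_PER_RESIDUE = 3    # assumption: illustrative value for a random-search model
SAMPLES_PER_SECOND = 1e13        # assumption: one conformation tried every ~0.1 picosecond
AGE_OF_UNIVERSE_S = 13.8e9 * 365.25 * 24 * 3600  # assumption: ~13.8 billion years, in seconds

# Work in log10 to avoid huge numbers: total states = 3^100.
log10_states = RESIDUES * math.log10(CONFORMATIONS_PER_RESIDUE)
log10_seconds = log10_states - math.log10(SAMPLES_PER_SECOND)

print(f"Possible conformations: ~10^{log10_states:.1f}")
print(f"Exhaustive random search: ~10^{log10_seconds:.1f} s "
      f"vs. universe age ~10^{math.log10(AGE_OF_UNIVERSE_S):.1f} s")
```

The result depends entirely on the assumed parameters. The sketch models only the text's stated condition that folding be an entirely random search.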

Living systems clearly exhibit massive amounts of complex specified information and, it appears, not all of it is stored in the DNA. Materialists claim that this complexity can be assembled by emulating agency causation, in place of true agency (design), through natural selection acting on random mutation. The next few sections of this paper evaluate the viability of that claim.

5 Origin of Life (Abiogenesis)